Every System I Built Was Designed for the Day I Left

The processes, architectures, and organizational investments I made so that every production system I built at a mid-size brokerage would survive without me.

TL;DR — Over six years as the sole product and technical lead at a mid-size Brazilian brokerage (80+ realtors, 2,000 listings), I built production systems spanning data quality, video automation, web acquisition, and campaign delivery. Each was designed for organizational ownership from day one. When I returned 18 months after leaving, most were running independently — and the one that wasn’t taught me the most.

The Brokerage Had No Technology Function

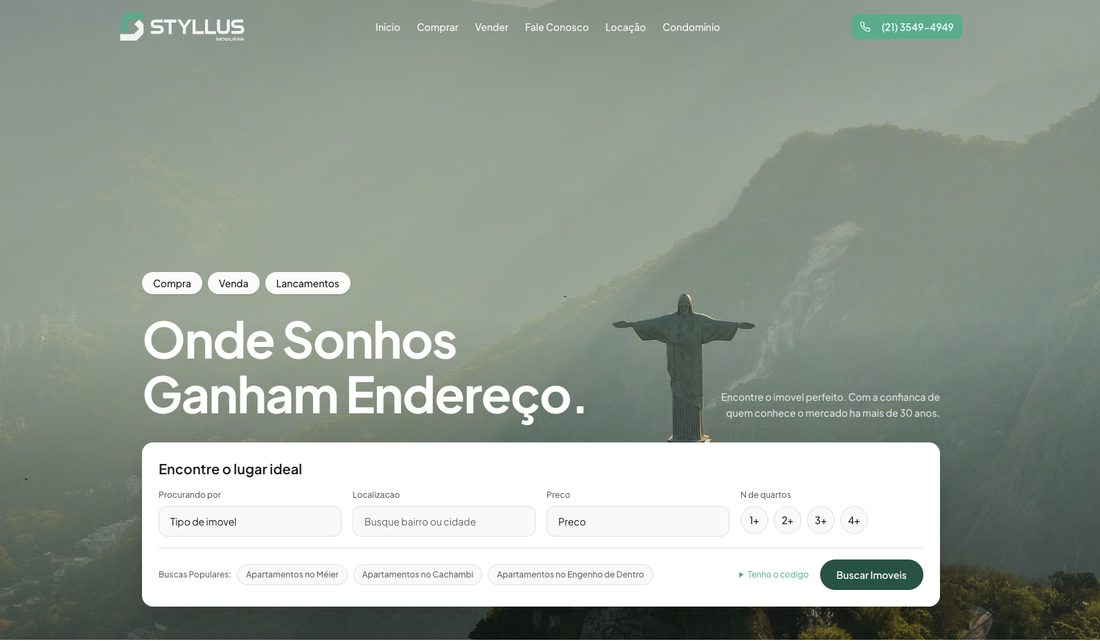

In January 2020, Styllus Imobiliaria had 80+ realtors, five stores, and zero custom technology. Everything ran on vendor templates and manual processes — listing syndication through a third-party portal manager, video editing outsourced to an agency, landing pages on Unbounce, the website on a vendor template. The technology that powered the business was rented, not owned.

I was brought in not to fill a gap in an existing org chart, but to create a technology function where none existed. The scale was real: 2,000 active listings, 4,000+ syndicated across portals, 150,000+ monthly landing page views. This was not a garage operation experimenting with tooling. It was a mid-size brokerage that needed systems matching its ambition.

Over the years that followed, I built systems that created measurable business value. But the deeper work — the work this post is actually about — was engineering those systems, and the organization around them, so the value would compound after I left. Every architecture decision, every training investment, every documentation artifact was shaped by a single question: what happens when I’m not here?

What I Built and Who Runs It Now

Each system addressed a distinct business problem with its own operator. Isolated systems were a deliberate choice: for an organization without engineers, if one breaks, the others keep running.

flowchart TD

ERP["Property Management System<br>(ERP)"] --> DP["Data Pipeline<br>2,000 listings at 10/10 quality"]

ERP --> VR["Video Render Farm<br>$0.02/video, full-catalog coverage"]

DP --> WS["Brokerage Website<br>2,000 listing pages, SSG on edge"]

DP --> LP["Landing Page Platform<br>Sub-500KB pages, edge-deployed"]

VR --> WS

DOC["Documentation Portal<br>Architecture, runbooks, onboarding"] -.-> DP

DOC -.-> VR

DOC -.-> LP

DOC -.-> WS

| System | Business Impact | Who Operates It Today |
|---|---|---|
| Data Pipeline | 2,000 listings at 10/10 quality, +35% conversion | Operations coordinators |
| Video Render Farm | $0.02/video vs $25, 100+ videos/month | Receptionists |
| Landing Page Platform | +45% conversion, -20% CPL | Marketing coordinators |
| Brokerage Website | +35% organic traffic, -96% page weight | Outsourced developers |
| Documentation Portal | Onboarding for all systems | Self-service |

Every row in that table has an operator other than me. That was not an accident.

Architectural Foresight: Designing for Ownership

Three cross-cutting principles explain why these systems survived without me.

flowchart LR

P1["Eliminate Operational<br>Surface Area"] --> I1["Static files on edge<br>(no servers to monitor)"]

P1 --> I2["Ephemeral containers<br>(self-terminate after render)"]

P1 --> I3["Build-time validation<br>(fails build, not production)"]

P2["Configurable Rules<br>Over Hardcoded Logic"] --> I4["22 toggleable rules<br>via dashboard"]

P2 --> I5["YAML frontmatter<br>as config layer"]

P3["Build-Time Validation<br>Over Runtime QA"] --> I6["Zod schema catches<br>malformed pages before deploy"]

P3 --> I7["Pipeline flags unfixable<br>listings for human review"]

Eliminate operational surface area. The website and landing pages are static files served from Cloudflare’s edge — no servers to monitor, no databases to babysit, no runtime dependencies to patch. The video render farm uses ephemeral containers that spin up, process a queue, and self-terminate — no idle infrastructure accumulating cost or requiring maintenance. The design goal: if nobody touches the system for six months, it keeps running.
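The spin-up, drain, self-terminate lifecycle can be sketched roughly as follows. This is a minimal illustration, not the production worker: `RenderJob`, `drainQueue`, and the in-memory array standing in for the queue are hypothetical names, and rendering is synchronous here only to keep the example short.

```typescript
// Hypothetical job shape; the real queue and renderer are not described in this post.
interface RenderJob {
  listingId: string;
  sourceClips: string[];
}

interface DrainResult {
  rendered: string[]; // listing IDs that rendered successfully
  failed: string[];   // listing IDs left for a human to look at
}

// Process every queued job once, then return so the container can exit.
// There is no polling loop: an empty queue means the worker is done.
function drainQueue(
  queue: RenderJob[],
  render: (job: RenderJob) => void,
): DrainResult {
  const result: DrainResult = { rendered: [], failed: [] };
  let next = queue.shift();
  while (next !== undefined) {
    const job = next;
    try {
      render(job);
      result.rendered.push(job.listingId);
    } catch {
      // One bad job must not block the rest of the batch.
      result.failed.push(job.listingId);
    }
    next = queue.shift();
  }
  return result;
}

// Usage: the second job has no footage, so the stand-in renderer rejects it.
const demoQueue: RenderJob[] = [
  { listingId: "A-101", sourceClips: ["front.mp4", "kitchen.mp4"] },
  { listingId: "B-202", sourceClips: [] },
];
const demoResult = drainQueue(demoQueue, (job) => {
  if (job.sourceClips.length === 0) throw new Error("no footage uploaded");
});
```

The point of the shape is that the function returns when the queue is empty. Whatever runs it can then shut the container down, so nothing sits idle between batches.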

Configurable rules engines over hardcoded logic. The data pipeline’s 22 business rules are individually toggleable through a dashboard, without code changes. Portal scoring criteria shift; the operations team adjusts in hours, not sprint cycles. The landing page platform uses YAML frontmatter as a configuration layer — tracking pixels, form fields, A/B variants, all declared in the same file that holds the content. Same principle: the people closest to the work can adjust the system without waiting on engineering.
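A toggleable rules engine of this kind can be sketched in a few lines. The listing shape, the two example rules, and the function names below are assumptions made for illustration; none of the 22 production rules are reproduced here.

```typescript
// Hypothetical shapes: this post does not describe the real listing model
// or any of the 22 production rules.
interface Listing {
  id: string;
  title: string;
  description: string;
}

interface Rule {
  id: string;
  enabled: boolean; // the switch an operator would flip from the dashboard
  apply: (listing: Listing) => Listing;
}

// Two illustrative rules. Each is a pure function, so turning one off
// cannot change how the others behave.
const defaultRules: Rule[] = [
  {
    id: "trim-title",
    enabled: true,
    apply: (l) => ({ ...l, title: l.title.trim() }),
  },
  {
    id: "collapse-description-whitespace",
    enabled: true,
    apply: (l) => ({ ...l, description: l.description.replace(/\s+/g, " ").trim() }),
  },
];

// Toggling returns a new rule list; no rule code changes.
function setRuleEnabled(rules: Rule[], id: string, enabled: boolean): Rule[] {
  return rules.map((r) => (r.id === id ? { ...r, enabled } : r));
}

// Apply only the enabled rules, in order.
function runRules(listing: Listing, rules: Rule[]): Listing {
  return rules
    .filter((r) => r.enabled)
    .reduce((current, rule) => rule.apply(current), listing);
}

// Usage
const rawListing: Listing = {
  id: "L-1",
  title: "  Casa no Centro ",
  description: "3  quartos,\n 2 vagas",
};
const cleaned = runRules(rawListing, defaultRules);
const withoutTrim = runRules(rawListing, setRuleEnabled(defaultRules, "trim-title", false));
```

Because the on/off state is data rather than code, a dashboard only needs to persist one boolean per rule.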

Build-time validation instead of runtime QA. The landing page platform validates every page against a Zod schema at build time — a malformed page fails the build rather than deploying broken. The data pipeline flags listings it cannot auto-correct for human review instead of silently skipping them. These choices push failure left, where it is cheap and visible, instead of right, where it reaches production and users.
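To make the build-time idea concrete, here is a dependency-free sketch of the check. The platform itself uses a Zod schema; this hand-rolled validator is a stand-in so the example runs on its own, and the field names (`title`, `slug`, `trackingPixelId`, `formFields`) are assumptions rather than the real schema.

```typescript
// Hypothetical frontmatter fields; this post mentions tracking pixels and
// form fields but does not list the actual schema.
interface PageSource {
  file: string;
  frontmatter: Record<string, unknown>;
}

function isNonEmptyString(value: unknown): value is string {
  return typeof value === "string" && value.trim() !== "";
}

// Return every problem found in one page's frontmatter (empty array = valid).
function validateFrontmatter(fm: Record<string, unknown>): string[] {
  const errors: string[] = [];
  if (!isNonEmptyString(fm["title"])) {
    errors.push("title: expected a non-empty string");
  }
  const slug = fm["slug"];
  if (!isNonEmptyString(slug)) {
    errors.push("slug: expected a non-empty string");
  } else if (!/^[a-z0-9-]+$/.test(slug)) {
    errors.push("slug: use lowercase letters, digits, and hyphens only");
  }
  if (!isNonEmptyString(fm["trackingPixelId"])) {
    errors.push("trackingPixelId: expected a non-empty string");
  }
  const fields = fm["formFields"];
  if (!Array.isArray(fields) || fields.length === 0 || !fields.every(isNonEmptyString)) {
    errors.push("formFields: expected a non-empty list of field names");
  }
  return errors;
}

// Collect problems across all pages and throw, so the mistake surfaces
// in the build instead of on a live page.
function assertPagesValid(pages: PageSource[]): void {
  const problems: string[] = [];
  for (const page of pages) {
    for (const error of validateFrontmatter(page.frontmatter)) {
      problems.push(`${page.file}: ${error}`);
    }
  }
  if (problems.length > 0) {
    throw new Error(`Build failed:\n${problems.join("\n")}`);
  }
}

// Usage
const goodPage: PageSource = {
  file: "launch-a.md",
  frontmatter: { title: "Launch A", slug: "launch-a", trackingPixelId: "px-123", formFields: ["name", "phone"] },
};
const badPage: PageSource = {
  file: "launch-b.md",
  frontmatter: { title: "Launch B", slug: "Launch B", formFields: [] },
};
```

Calling `assertPagesValid([goodPage, badPage])` throws with one line per problem in `launch-b.md`, which is the behavior a marketing coordinator would see as a failed build.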

These principles were not adopted because I planned to leave. They were adopted because this is how you build for organizations without dedicated engineering teams. A brokerage with 80+ realtors and no engineers needs systems that are operationally simple by design. My departure for an MBA created a hard deadline that tested the design — but the design preceded the departure.

Building the Capability, Not Just the Software

Software that only one person can operate is not an asset. It is a liability with a countdown timer. The real deliverable was organizational capability.

Operations coordinators learned to run the data pipeline. I trained two coordinators on scheduling, rule configuration, and exception handling. They now adjust the 22 business rules independently when portal criteria change — the configurable dashboard was designed specifically so this would not require a developer.

Receptionists learned to operate the video render farm end-to-end. The front desk manages the full lifecycle: receiving uploads from realtors, batching jobs, reviewing rendered output side-by-side against raw footage, and approving for YouTube publication. The approval workflow was a deliberate trust-building mechanism — it let the team gain confidence in machine-generated output before moving toward full automation.

Marketing coordinators learned to launch landing pages independently. Copy a markdown file, edit eight frontmatter fields, upload images to Cloudflare R2, push to GitHub. Build-time schema validation replaces the multi-day QA cycle they had with Unbounce. I walked the first coordinators through their initial pages field by field, published full documentation to the internal portal, and trained the external marketing agency so they could manage campaigns without my involvement.

Sixty realtors learned structured data entry. This was the highest-leverage training I ran. I did not teach realtors “how to use the pipeline” — I taught them how to provide complete data the pipeline could optimize. The distinction matters. The pipeline could auto-correct predictable errors, but it could not invent data that was never entered. Training realtors to fill in every field in the property management system — condo fees, IPTU, full addresses, complete photo sets — was the upstream fix that made everything downstream work better.
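The split between what a pipeline can fix and what only a realtor can supply might look like the sketch below. The record shape, the `MIN_PHOTOS` threshold, and the function names are hypothetical; only the field list (address, condo fees, IPTU, photos) comes from the training described above.

```typescript
// Hypothetical record shape, based on the fields named above.
interface ListingRecord {
  id: string;
  address: string | null;
  condoFee: number | null; // condo fee
  iptu: number | null;     // IPTU, the municipal property tax
  photoCount: number;
}

interface TriageResult {
  listing: ListingRecord;
  missing: string[]; // empty when nothing needs a human
}

const MIN_PHOTOS = 5; // assumed threshold for a "complete photo set"

function triage(record: ListingRecord): TriageResult {
  // Predictable error: stray whitespace in an address can be fixed automatically.
  const address =
    record.address === null ? null : record.address.replace(/\s+/g, " ").trim();
  const listing: ListingRecord = { ...record, address };

  // Missing data cannot be invented, so it is reported instead of guessed.
  const missing: string[] = [];
  if (address === null || address === "") missing.push("address");
  if (listing.condoFee === null) missing.push("condoFee");
  if (listing.iptu === null) missing.push("iptu");
  if (listing.photoCount < MIN_PHOTOS) missing.push("photos");
  return { listing, missing };
}

// Listings that still need a realtor to enter something.
function needsReview(records: ListingRecord[]): TriageResult[] {
  return records.map(triage).filter((r) => r.missing.length > 0);
}

// Usage
const sampleRecords: ListingRecord[] = [
  { id: "L-1", address: " Rua  A, 10 ", condoFee: 450, iptu: 1200, photoCount: 12 },
  { id: "L-2", address: "Rua B, 22", condoFee: 300, iptu: null, photoCount: 2 },
];
const fixedFirst = triage(sampleRecords[0]);
const reviewQueue = needsReview(sampleRecords);
```

Here `L-1` is cleaned up silently, while `L-2` lands in the review queue with `iptu` and `photos` listed as missing. No downstream rule can close that gap, which is why the training mattered.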

Selecting and onboarding external developers was the closest proxy for how I would build a team at any company. For the website migration, I did not hire hands to execute tickets. I hired developers who would own the system after I left. The criteria: familiarity with the Astro and Cloudflare stack so ramp-up would not consume the sprint, and communication skills with a non-technical client so the brokerage could talk to them directly — not through me as intermediary.

The PR-based workflow was designed specifically for autonomous operation: every change has a description, a review trail, and a deployment preview. Six months later, when a developer asks “why did we build the sitemap this way,” the answer lives in the pull request, not in anyone’s memory. I supplemented this with an internal documentation portal covering architecture decisions, deployment procedures, and integration points. The maintenance contract I structured works precisely because everything underneath it was designed for independence first. A maintenance contract on a fragile, undocumented system is a lifeline. A maintenance contract on a static site with clear documentation and autonomous developers is a growth engine.

| System | Complexity | Handoff Strategy | What I’d Change |
|---|---|---|---|
| Data Pipeline | Medium | Trained coordinators + dashboard + docs | Nothing (held up well) |
| Video Render Farm | Medium | Trained receptionists + approval workflow + docs | Add infrastructure runbooks earlier |
| Landing Page Platform | Low | Trained coordinators + schema validation + agency walkthrough | Nothing (cleanest handoff) |
| Brokerage Website | Higher | External devs + PR workflow + maintenance contract | Nothing (PR workflow proved itself) |
| Documentation Portal | Self-service | Training Director owns content | Nothing |

The goal was to make my institutional knowledge searchable, not indispensable.

What the Technology Investments Meant for the Business

Each system changed what was economically rational for the brokerage. The insight is not “we saved money.” The insight is: capabilities that did not exist before became the default.

Full-catalog video was not a cost reduction. It was a new capability. Before the render farm, video coverage was artificially scarce — $20-25 per video meant only premium listings got one. At $0.02 per video, every listing gets a video, same-day. No competitor in the market had full-catalog video coverage. The math made it possible; the strategic value is the capability itself.
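The arithmetic behind that shift, as a rough sketch. It assumes one video per active listing in a single pass over the catalog and uses the top of the $20-25 range. The totals are an illustration derived from the per-video prices, not figures the brokerage reported.

```typescript
// Per-video prices and listing count come from this post.
// Amounts are kept in cents to avoid floating-point rounding.
const ACTIVE_LISTINGS = 2000;
const AGENCY_CENTS_PER_VIDEO = 2500; // $25.00, top of the $20-25 range
const FARM_CENTS_PER_VIDEO = 2;      // $0.02

function catalogCostInDollars(listings: number, centsPerVideo: number): number {
  return (listings * centsPerVideo) / 100;
}

const agencyCatalogCost = catalogCostInDollars(ACTIVE_LISTINGS, AGENCY_CENTS_PER_VIDEO); // 50000
const farmCatalogCost = catalogCostInDollars(ACTIVE_LISTINGS, FARM_CENTS_PER_VIDEO);     // 40
const costRatio = AGENCY_CENTS_PER_VIDEO / FARM_CENTS_PER_VIDEO;                         // 1250
```

At roughly $50,000 for one pass, full coverage was never on the table. At roughly $40, it stops being a budgeting question at all.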

The landing page platform was a competitive positioning move. In a market where every brokerage runs the same SaaS landing pages, the brokerage now serves sub-500KB pages that use less of a visitor’s mobile data, which matters where data plans are tight. Nobody else in the lançamentos (new-development launch) market had framed bandwidth as an architecture problem. That is a product insight, not an infrastructure choice.

The data pipeline gave every listing a perfect quality score — not through better realtors, but through better systems. The same inventory, processed through 22 automated rules, produced +35% conversion and +40% CTR. Revenue metrics earned from existing assets.

Compounding effects matter. The $12,600 in former Unbounce costs was redirected into ad spend behind pages that now convert 45% better. Better pages and more budget behind them. The website migration created an organic acquisition channel that did not previously exist — +35% organic traffic is net-new pipeline feeding the same sales team.

Over time, these systems shifted the brokerage from a company that bought technology services to a company that owned technology capabilities. That shift changed its competitive position.

What Held Up and What Didn’t

I returned in December 2025 for a consulting project — a new landing page platform the brokerage needed for their real estate launch campaigns. But building inside the same organization where my prior systems had been running for 18 months gave me something rare: honest data on what survives the departure of its creator.

What held up:

The data pipeline was the strongest survivor. The operations team had adjusted rules independently multiple times as portal criteria evolved. The configurable dashboard was the right abstraction — it gave non-technical operators real control without exposing system internals. The pipeline was doing exactly what it was designed to do, operated by exactly who it was designed for.

The documentation portal had become an organizational default. New employees onboarded through it. The external developers referenced it for architecture context. It was not gathering dust — it was load-bearing.

The website’s PR-based workflow was functioning as designed. The maintenance contract with the development team had produced regular updates. The PR trail I had set up made it possible for developers to understand past decisions without asking anyone. Static files on Cloudflare’s edge meant the site had required zero infrastructure intervention since launch.

What didn’t hold up as well:

The video render farm needed more infrastructure-level documentation. The system itself was sound — it rendered videos reliably. But when something broke at the infrastructure layer, the team could not diagnose independently. I had documented what the system does but not enough about what to do when it doesn’t. Operational runbooks for infrastructure failure modes were insufficient.

This was an honest reflection of my own growth. The earlier systems reflected a less disciplined approach to documentation than the later ones. By the time I built the landing page platform, I was writing operational runbooks from day one — a practice I developed precisely because the video render farm taught me what happens when you don’t.

The initiative that failed outright. Not every project made it to production. Shortly after OpenAI released the ChatGPT API, I built a prototype for realtors to draft initial listing descriptions using structured prompts: property attributes, neighborhood context, and selling points fed into a template that generated a first draft. I was genuinely excited about the potential. Adoption peaked at around 15%, which was modest but enough to test the concept, and then it declined. The brokerage’s sales teams are gregarious. Peer behavior drives adoption more than feature quality, and once the early adopters stopped using the tool visibly, the rest never started.

I pulled it back, redesigned the approach with sales managers, and arrived at a fundamentally different insight: the best automation for this workforce was automation they never saw. Realtors appreciated the output but wanted nothing to do with the process. So instead of asking them to interact with an AI tool, I embedded description generation invisibly into the data pipeline, where the system generates optimized descriptions automatically, without any realtor involvement. That failure directly shaped how the data pipeline’s AI descriptions work today: zero user interaction, zero adoption friction, 100% coverage.

What I’d do differently:

Infrastructure runbooks from day one. “How it works” is not the same document as “what to do when it doesn’t.” Both need to exist before you leave.

Build observability earlier. Operations teams do not trust systems they cannot see into. The data pipeline dashboard was built later than it should have been — and early opacity slowed trust-building with the operations team.

Formalize per-system handoff strategies earlier. The handoff table above represents thinking done retroactively. Each system needed a different approach from the beginning, not a uniform process applied at the end.

What I Chose Not to Do

I did not lobby for engineering headcount. Each system was designed so existing people — coordinators, receptionists, marketing teams, external agencies — could operate it. The brokerage did not need engineers. It needed systems that respected its actual org structure.

I did not optimize for personal velocity. I chose Astro over frameworks that would have been faster for me because Astro was more maintainable for the team that would inherit the work. I chose autonomous developers over ticket-executors because the brokerage needed people who could operate without me. I chose documentation that slowed every sprint but enabled every handoff.

Lessons and Reflections

The best handoff starts at architecture time, not departure time. Systems that survived cleanly had handoff embedded in their design — configurable dashboards, build-time validation, edge-deployed static files. The video render farm, where operational knowledge lived too much in my head, is the counterexample. The lesson is not “document everything” — it is “design systems that are documentable.”

Different systems need different organizational support. A static site, a configurable data pipeline, and an ephemeral render farm have fundamentally different operational profiles. Treating them with a uniform handoff strategy was a mistake I made early and corrected late. Each system’s complexity, operator skill level, and failure modes should dictate its own handoff approach.

Training is a product deliverable, not a project afterthought. The company-wide realtor training on structured data entry was the highest-leverage activity I ran for the data pipeline — higher than any engineering sprint. It improved the quality of input data across 2,000 listings. No amount of downstream automation compensates for garbage upstream.

SMB context is a design constraint, not a limitation. Eighty realtors with no engineering team is not a handicap — it is a forcing function for better design. Simpler architectures, lower operational surface, self-healing systems, documentation that assumes the reader is not a developer. The constraints I operated under at this brokerage produced systems that are more resilient than many I have seen at companies with dedicated platform teams.

This story is not about how many systems I built. It is about building the organizational capability to own technology that creates business value. The systems are evidence. The capability is the product.